pre-commit hooks¶

Use pre-commit hooks for safer Hamilton code changes¶

This page gives an introduction to pre-commit hooks and how to use custom hooks to validate your Hamilton code.

What are pre-commit hooks?¶

A pre-commit hook is a script or command that’s executed automatically before making a commit. The goal of these hooks is to standardize code formatting and catch erroneous code before being committed. For example, popular hooks include ensuring files have no syntax errors, sorting imports, and normalizing line breaks.

Note that it’s different from testing, which focuses on the behavior of the code. You can think of pre-commit hooks as checks and formatting you would do everytime you save a file.

Add pre-commit hooks to your project¶

Hooks are a mechanism of the git version control system. You can find your project’s hooks under the .git/hooks directory (it might be hidden by default). There should be many files with the .sample extension that serve as example scripts.

The preferred way of working with pre-commit hooks is through the pre-commit Python library. This library allows you to import and configure hooks for your repository with a .pre-commit-config.yaml file.

Steps to get started¶

install the pre-commit library

pip install pre-commit

add a

.pre-commit-config.yamlto your repository# .pre-commit-config.yaml repos: # repository with hook definitions - repo: https://github.com/pre-commit/pre-commit-hooks rev: v2.3.0 # release version of the repo hooks: # list of hooks from the repo to include in this project - id: end-of-file-fixer - id: trailing-whitespace - id: check-yaml args: ['--unsafe'] # some accept arguments # download another repository with hooks - repo: https://github.com/psf/black rev: 22.10.0 hooks: - id: black

install the hooks defined in

.pre-commit-config.yamlpre-commit installNow, hooks will automatically run on

git committo manually run hooks

pre-commit run --all-files

Custom Hamilton pre-commit hooks¶

pre-commit hooks are great developer tools, but off-the-shelf solutions aren’t aware of the Hamilton framework. Hence, we developed a pre-commit hook to help you author Hamilton dataflows! Under the hood, they leverage the hamilton CLI, so if you are unfamiliar with it, feel free to install it and view the --help messages.

pip install sf-hamilton[cli]

hamilton --help

Checking dataflow definition¶

Hamilton doesn’t have many syntactic constraints, but there’s a few things we want to catch:

functions parameters and return are type annotated

a node consistently has the same type (e.g., a parameter in multiple functions)

functions with a name starting with underscore (

_) are ignored from the dataflowfunctions with a

@configdecorator received a trailing double underscore with a suffix (e.g.,hello__weekday(),hello__weekend())

Instead of reimplementing this logic, we can try to build the Hamilton Driver with the command hamilton build MODULES and catch errors. This also ensures the verification is always in sync with the actual build mechanism. This hook will help prevent us from committing invalid dataflow definitions.

Checking dataflow paths¶

A dataflow definition might be valid, but it might break paths in unexpected ways. The command hamilton validate (which internally uses Driver.validate_execution()) can check if a node is reachable.

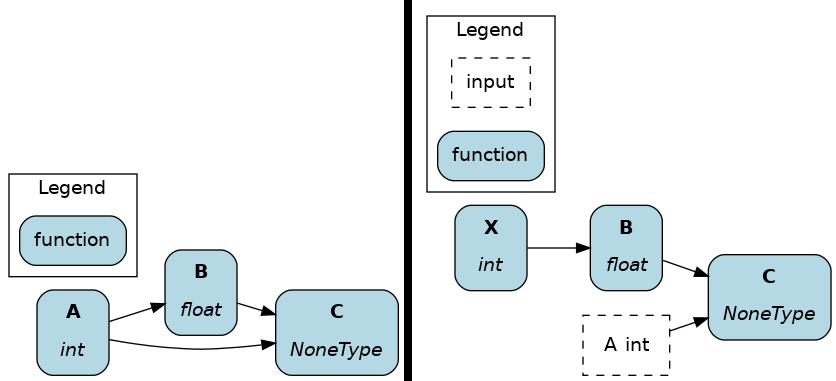

For example, take a look at my_module.py, which contains the nodes A, B, C, and the changes between v1 and v2.

# my_module.py - v1

def A() -> int: ...

def B(A: int) -> float: ...

def C(A: int, B: float) -> None: ...

# driver code

dr = driver.Builder().with_mdoules(my_module).build()

dr.validate_execution(final_vars=["C"]) # <- success

# my_module.py - v2

def B(X: int) -> float: ...

def C(A: int, B: float) -> None: ...

# driver code

dr = driver.Builder().with_mdoules(my_module).build()

dr.validate_execution(final_vars=["C"]) # <- failure. missing `A`

In v1, the dataflow could be validated for C without any inputs. Now, a developer made B depend on X instead of A and removed A. This change accidentally impacted C which now depends on the external input A. Note that both v1 and v2 have a valid dataflow definition. To catch breaking changes to the path to C, we could use hamilton validate --context context.json my_module.py with the context:

// context.json

{ "HAMILTON_FINAL_VARS": ["C"] }

// will call .validate_execution(final_vars["C"])

Note

pre-commit hooks can prevent commits from breaking a core path, but you should use unit and integration tests for more robust checks.

Add Hamilton pre-commit to your project¶

We alluded to the relationship between pre-commit hooks and the hamilton command line tool. In fact, the basic hook is designed to take a list of hamilton commands and will execute them in order when hooks are triggered.

To use them, add this snippet to your .pre-commit-config.yaml and adapt it to your project:

- repo: https://github.com/dagworks/hamilton-pre-commit

rev: v0.1.2 # use a ref >= 0.1.2

hooks:

- id: cli-command

name: Hamilton CLI command

args: [ # list of CLI commands to execute

hamilton build my_module.py,

hamilton build my_module2.py,

hamilton validate --context context.json my_module.py my_module2.py,

]

The above snippet would:

check the dataflow definition of

my_module.pycheck the dataflow definition of

my_module2.pyvalidate the execution path specified in

context.jsonfor dataflow composed ofmy_module.pyandmy_module2.py

You can pass any hamilton CLI command to the pre-commit hook, but it will only care about it succeeding or failing.